Project information

- Client: CHIST-ERA

- Project URL: https://www.chistera.eu/projects/soon

- Publication: https://ieeexplore.ieee.org/document/9569644

- Categories: Reinforcement Learning, Multi-Agents, Hyperparameter Optimization, Curriculum Learning

- Main technologies: Python, Gym, SimPy, Stable Baselines, Ray RLlib, Ray Tune (earlier prototype: C#, Unity, Unity ML-Agents)

Summary

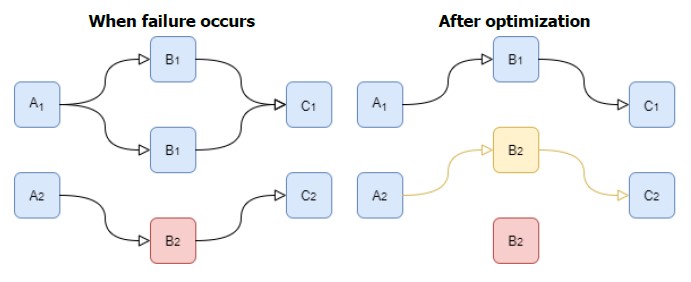

SOON-RL is a Reinforcement Learning (RL) project that optimizes production in a manufacturing workshop modelled as a multi-agent environment. The goal was to train agents that manage a sequence of specialized machines efficiently, fulfilling production orders in the minimum amount of time while adapting dynamically to disruptions such as machine failures.

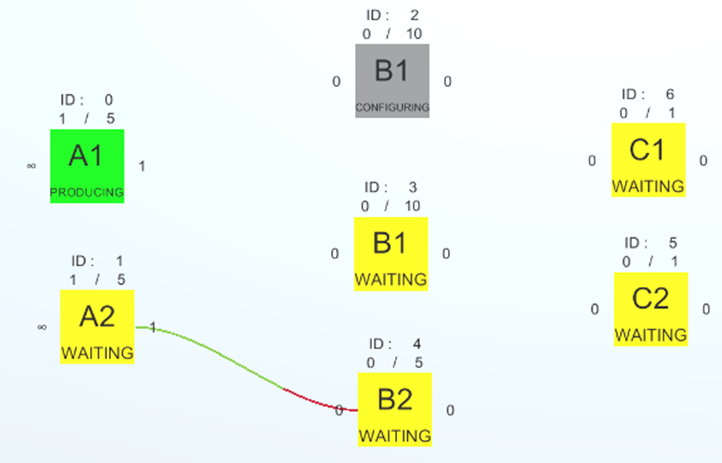

I developed a custom simulation environment using Python, Gym, and SimPy. To support multi-agent training, I migrated the architecture from Stable Baselines to Ray RLlib, which allows multiple machine agents to learn collaboratively. After tuning the hyperparameters and the reward design with Ray Tune, the final trained agents learned to route production and reconfigure machines so that orders were completed in the theoretical minimum number of production steps.
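The project's own environment code is not shown here. As a hypothetical illustration of the multi-agent setup, the sketch below mimics the per-agent dictionary convention of recent RLlib `MultiAgentEnv` versions (observations, rewards, `terminateds` and `truncateds` keyed by agent ID, with a special `"__all__"` key for the whole episode) in plain Python, without importing Ray, Gym or SimPy. The class `ToyLineEnv`, the one-action-per-step timing, the buffer model and the `-1` reward per step are all assumptions, chosen so that maximizing return is equivalent to minimizing the number of production steps.

```python
from typing import Dict, Tuple

# Hypothetical encoding of the four actions described for this project.
WAIT, RECONFIGURE, FETCH, PRODUCE = range(4)


class ToyLineEnv:
    """Hypothetical A -> B -> C line using RLlib-style per-agent dicts (no Ray import)."""

    def __init__(self, machines=("A", "B", "C"), order_size=2, max_steps=100):
        self.machines = list(machines)
        self.order_size = order_size  # finished parts the order requires
        self.max_steps = max_steps

    def reset(self) -> Tuple[Dict[str, tuple], dict]:
        self.t = 0
        self.buffer = [0] * len(self.machines)    # parts waiting before machine i
        self.loaded = [False] * len(self.machines)  # part fetched onto machine i
        self.finished = 0
        return self._obs(), {}

    def _obs(self) -> Dict[str, tuple]:
        # Each agent sees its input buffer, whether it holds a part, and progress.
        return {m: (self.buffer[i], int(self.loaded[i]), self.finished)
                for i, m in enumerate(self.machines)}

    def step(self, actions: Dict[str, int]):
        self.t += 1
        # Downstream machines act first, so a part produced this step only
        # becomes available to the next machine on the following step.
        for i in reversed(range(len(self.machines))):
            a = actions.get(self.machines[i], WAIT)
            if a == FETCH and not self.loaded[i]:
                if i == 0:  # first machine draws from unlimited raw material
                    self.loaded[i] = True
                elif self.buffer[i] > 0:
                    self.buffer[i] -= 1
                    self.loaded[i] = True
            elif a == PRODUCE and self.loaded[i]:
                self.loaded[i] = False
                if i + 1 < len(self.machines):
                    self.buffer[i + 1] += 1
                else:
                    self.finished += 1
            # WAIT and RECONFIGURE do not change this toy state.
        done = self.finished >= self.order_size
        truncated = self.t >= self.max_steps and not done
        rewards = {m: -1.0 for m in self.machines}  # every step costs time
        terminateds = {m: done for m in self.machines}
        truncateds = {m: truncated for m in self.machines}
        terminateds["__all__"], truncateds["__all__"] = done, truncated
        return self._obs(), rewards, terminateds, truncateds, {}


def greedy(obs: tuple, is_first: bool) -> int:
    """Hand-written baseline: produce if loaded, else fetch if input exists."""
    buffered, loaded, _ = obs
    if loaded:
        return PRODUCE
    return FETCH if (is_first or buffered > 0) else WAIT


env = ToyLineEnv()
obs, _ = env.reset()
episode_return, steps = 0.0, 0
while True:
    actions = {m: greedy(o, m == env.machines[0]) for m, o in obs.items()}
    obs, rew, term, trunc, _ = env.step(actions)
    episode_return += rew["A"]
    steps += 1
    if term["__all__"] or trunc["__all__"]:
        break
print(steps, episode_return)  # prints: 8 -8.0
```

With a constant per-step penalty, any policy that finishes the order sooner earns a higher return, which is one simple way to make "fewest production steps" the learning objective; the actual SOON-RL reward may have been shaped differently.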

Technical Details & Scenarios

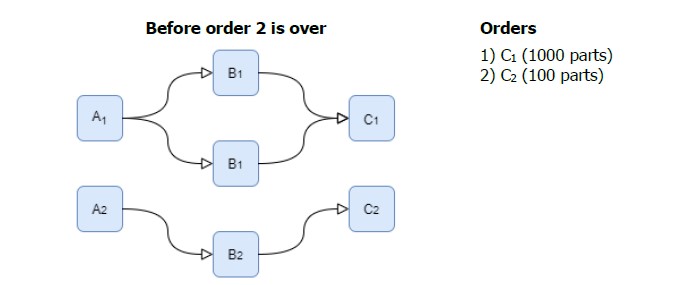

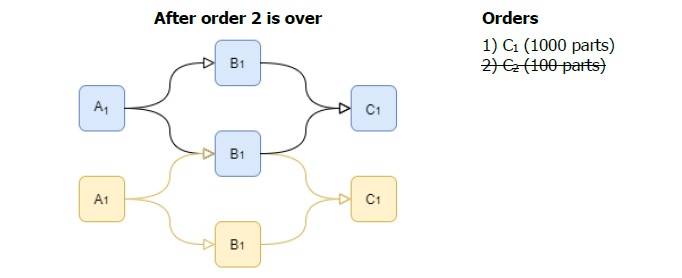

The core challenge was orchestrating a sequence of machines (e.g., producing part A1 → B1 → C1) that had to work collaboratively. At each decision point, every machine agent chose one of four discrete actions (Wait, Reconfigure, Fetch Parts, or Produce) based on real-time storage levels, recipes, and the statuses of the other machines.
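One common way to tie these four actions to storage levels, recipes and machine status is an action mask that marks which actions are currently valid. The source does not say whether SOON-RL used masking; the `MachineView` fields and the validity rules below are hypothetical, and they cover only the agent's own state (the statuses of other machines would enter the observation vector rather than the mask).

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List


class Action(IntEnum):
    WAIT = 0
    RECONFIGURE = 1
    FETCH_PARTS = 2
    PRODUCE = 3


@dataclass
class MachineView:
    """What one machine agent observes about itself (hypothetical fields)."""
    configured_for: str  # part type the machine is currently set up to make
    recipe_part: str     # part type the current order needs from it
    input_stock: int     # parts available in its input storage
    holding_parts: bool  # parts already fetched onto the machine
    output_space: int    # free slots in its output storage
    failed: bool         # True while the machine is broken down


def action_mask(v: MachineView) -> List[bool]:
    """Return validity of each of the four actions; Wait is always allowed."""
    mask = [False] * len(Action)
    mask[Action.WAIT] = True
    if v.failed:
        return mask  # a broken machine can only wait
    needs_reconfig = v.configured_for != v.recipe_part
    mask[Action.RECONFIGURE] = needs_reconfig and not v.holding_parts
    mask[Action.FETCH_PARTS] = (not needs_reconfig and not v.holding_parts
                                and v.input_stock > 0)
    mask[Action.PRODUCE] = (not needs_reconfig and v.holding_parts
                            and v.output_space > 0)
    return mask


v = MachineView(configured_for="B1", recipe_part="B2", input_stock=3,
                holding_parts=False, output_space=2, failed=False)
print([a.name for a in Action if action_mask(v)[a]])  # ['WAIT', 'RECONFIGURE']
```

Masking removes actions that can never help, so the agent spends its exploration on the real decision: when to wait, reconfigure, fetch or produce.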

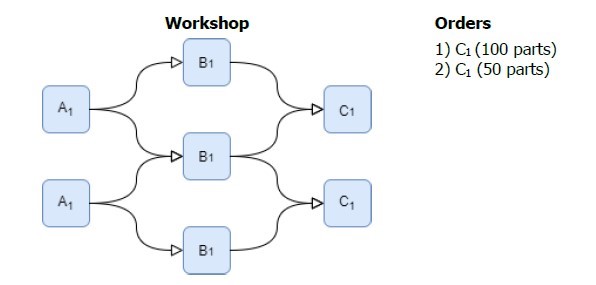

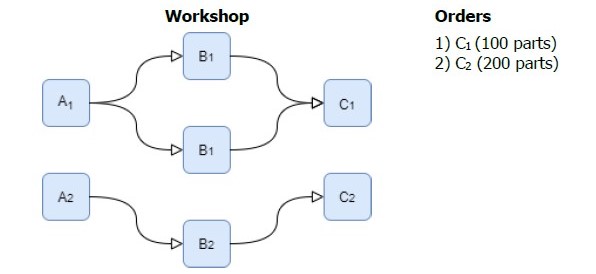

To ensure the RL agents were robust, they were trained and evaluated across three increasingly complex scenarios:

- Scenario 1 (Dynamic Reconfiguration): Fulfilling standard sequential orders, requiring agents to learn when to reconfigure themselves to produce a new type of part when order requirements changed.
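To make "when to reconfigure" concrete, the sketch below counts the minimum number of Reconfigure actions each machine stage needs when orders are served strictly in the given sequence: a stage must reconfigure exactly when the part type it is asked for differs from its current setup. The function name, the `A2`/`B2` part types and the fixed serving order are hypothetical; if orders could be reordered or batched, fewer reconfigurations might suffice.

```python
from typing import List, Sequence


def min_reconfigurations(orders: Sequence[Sequence[str]],
                         initial: Sequence[str]) -> List[int]:
    """Minimum Reconfigure actions per stage for orders served in sequence.

    orders[k][i] is the part type order k needs from stage i (e.g. "B1");
    initial[i] is the configuration stage i starts with.
    """
    counts = [0] * len(initial)
    current = list(initial)
    for order in orders:
        for i, part in enumerate(order):
            if part != current[i]:  # requirement changed: must reconfigure
                counts[i] += 1
                current[i] = part
    return counts


orders = [("A1", "B1", "C1"), ("A1", "B1", "C1"),
          ("A2", "B2", "C1"), ("A1", "B1", "C1")]
print(min_reconfigurations(orders, initial=("A1", "B1", "C1")))  # [2, 2, 0]
```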

Project Background & Evolution

This project began as my Bachelor's thesis, initially developed in C# with Unity and Unity ML-Agents, including a custom UI for configuring workshop layouts. Following its success, I was brought on as a Research Assistant at HE-Arc to continue its development and migrated the framework to Python, gaining finer control over the learning algorithms and better support for scaling to many agents.